Why can I do Live Classification but run out of RAM when running the Impulse on the device?

- Project ID: 134024

- The Sony Spresense microcontroller board has 1.5 MB of RAM.

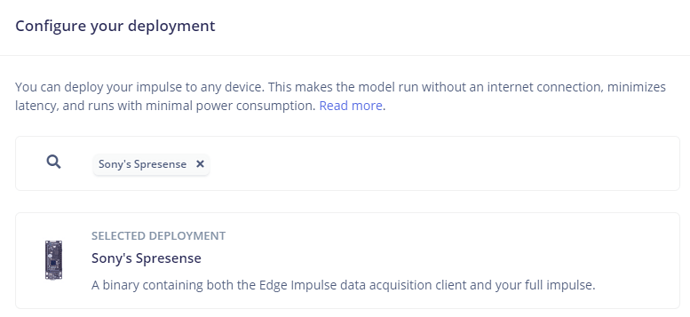

- The Deployment page shows 280.8K RAM usage.

- The device is running the default firmware:

I can do EI Studio Live Classification via either:

- WebUSB

- edge-impulse-daemon

Running edge-impulse-run-impulse results in:

```
Inferencing settings:
Image resolution: 96x96
Frame size: 9216
No. of classes: 3
Starting inferencing in 2 seconds...
Taking photo...
ERR: failed to allocate tensor arena
Failed to allocate TFLite arena (error code 1)
ERR: Failed to run impulse (-6)
```

Running edge-impulse-run-impulse --debug hangs at:

```
[SER] Started inferencing, press CTRL+C to stop...
```

Hi @MMarcial,

I'll have a look, thanks for reporting.


I have investigated a bit, and the model with the selected architecture is too big to fit in RAM.

I tried lowering the stream buffer size, but it's not enough.

Have you tried other architectures?

@ei_francesco

By lowering the stream buffer, do you mean you reduced the Impulse input block from, say, 96x96 to 16x16, 3x3, 1x1 or something like that?

- Try a new architecture?

  - As in, try another MCU, i.e., not a Sony Spresense?

  - Is the Spresense no longer an Edge Impulse fully supported board?

- When I select Sony's Spresense on the EI Studio Deployment page, the total RAM for the Impulse is estimated at 280.8K (Kelvin, or lowercase 'k' for kilo?). How does the ready-to-go firmware (default firmware, firmware bundle, firmware source code, pre-compiled firmware, or whatever it is called now) not fit into the Spresense's 1,500,000-byte RAM space?

  - This is the ready-to-go firmware. It is EI code; none of my code is inflating it. How can it not fit into this ginormous RAM?

- I re-compiled one of my custom Arduino apps that used an older version of the deployed Impulse as an Arduino library. The files were dated April 4th, 2023, so I assume that's when I deployed the library from the Studio.

  - Therefore, I believe the behind-the-scenes code that implements the ready-to-go firmware has changed and is no longer usable.

Please advise…

Hi @MMarcial,

> By lowering the stream buffer, do you mean you reduced the Impulse input block from, say, 96x96 to 16x16, 3x3, 1x1 or something like that?

No, there is a separate buffer used when streaming the camera, and it can be pretty big.
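To get a feel for why a camera streaming buffer can be much bigger than the model input, here is a small sketch of the arithmetic. The thread does not say what resolution or pixel format the firmware streams at, so the QVGA RGB565 figure below is a hypothetical example, and `frame_buffer_bytes` is an illustrative helper, not an Edge Impulse API.

```c
#include <stddef.h>

/* Hypothetical helper (not part of the Edge Impulse SDK):
   bytes needed to hold one uncompressed frame. */
static size_t frame_buffer_bytes(size_t width, size_t height, size_t bytes_per_pixel) {
    return width * height * bytes_per_pixel;
}

/* Model input from the log: 96x96 with one value per pixel gives 9216,
   the same number as the "Frame size: 9216" line (a pixel count). */
static size_t model_input_bytes(void) {
    return frame_buffer_bytes(96, 96, 1);
}

/* Hypothetical camera stream: QVGA (320x240) in RGB565, 2 bytes per pixel.
   The real firmware's stream format is an assumption here. */
static size_t example_stream_bytes(void) {
    return frame_buffer_bytes(320, 240, 2);
}
```

With these assumed numbers, one streamed frame (153,600 bytes) is roughly 17 times the 96x96 model input (9,216), so in this example the stream buffer, not the input block, is the larger allocation.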

By architecture, I mean the model architecture, e.g. using FOMO.

The code does fit; what doesn't fit is the tensor arena for this model, which is dynamically allocated.
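The failure described here can be illustrated with a minimal C sketch, assuming a fixed heap budget shared by the camera stream buffer and the tensor arena. The 512 KB budget, the bump allocator, and `try_allocate_arena` are all hypothetical; they model a dynamic arena allocation failing when too little memory is left, not the actual Edge Impulse or Spresense SDK allocator.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical heap budget for illustration; not the Spresense's real free heap. */
#define HEAP_BUDGET_BYTES (512u * 1024u)

static uint8_t heap_pool[HEAP_BUDGET_BYTES];
static size_t heap_used = 0;

/* Minimal bump allocator over a static pool: returns NULL once the
   budget is exhausted, so failures are deterministic. */
static void *bump_alloc(size_t n) {
    const size_t align = 16; /* assumed alignment for the arena */
    size_t start = (heap_used + align - 1) & ~(align - 1);
    if (start > HEAP_BUDGET_BYTES || n > HEAP_BUDGET_BYTES - start) {
        return NULL;
    }
    heap_used = start + n;
    return &heap_pool[start];
}

/* Allocates the stream buffer first, then the arena.
   Returns 0 on success, 2 if the stream buffer fails, 1 if the arena fails. */
static int try_allocate_arena(size_t stream_buffer_bytes, size_t arena_bytes) {
    heap_used = 0; /* start each attempt from an empty pool */
    if (bump_alloc(stream_buffer_bytes) == NULL) {
        return 2;
    }
    if (bump_alloc(arena_bytes) == NULL) {
        return 1;
    }
    return 0;
}
```

With these hypothetical numbers, a 150 KB stream buffer plus a 300 KB arena fits (`try_allocate_arena(153600, 307200)` returns 0), but a 300 KB stream buffer plus the same arena does not (returns 1): the arena allocation fails at run time even though the code itself is unchanged.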

We updated the Spresense SDK; it could be that this led to an increase in RAM usage, but I didn't check.

I am checking if we can lower it in the base firmware.

regards,

fdv

We recently merged new features for the Spresense (support for 48 kHz audio and for the Commonsense board), plus an update to the Spresense SDK that has probably lowered RAM usage.