Project ID: 191005

Background:

I am very new to machine learning and I am exploring a method to detect the position of poachers at a friend's place by analyzing the audio signals from their shots, so I would kindly ask for some general input from the community.

The goal of the ML algorithm is to detect/recognise 2 types of very short events among the background noise in continuous inference mode:

- Bullet shockwave signal

- Muzzle blast signal

Trilateration/triangulation methods are out of scope of this thread.

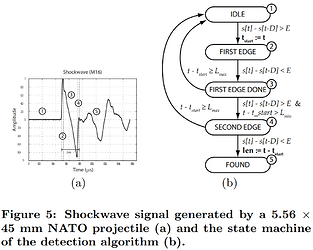

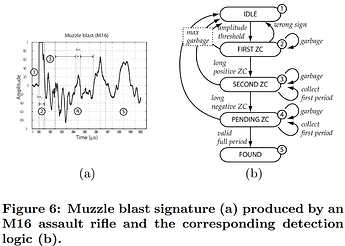

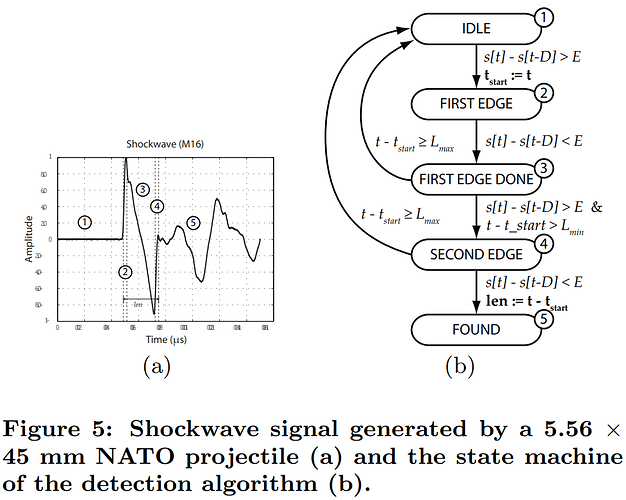

The waves should look like Figures 5 and 6 according to the paper Shooter localization and weapon classification with soldier-wearable networked sensors:

Citing the paper:

The most conspicuous characteristics of an acoustic shockwave…are the steep rising edges at the beginning and end of the signal. Also, the length of the N-wave is fairly predictable…and is relatively short (200-300 μs).

In contrast to shockwaves, the muzzle blast signatures are characterized by a long initial period (1-5 ms) where the first half period is significantly shorter than the second half [4].

…the real challenge for the matching detection core is to identify the first and second half periods properly.
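To get a feel for how short these events are once digitized, here is a rough sketch that converts the durations quoted above into a number of audio samples. The durations come from the paper; the two sample rates (16 kHz and 48 kHz) are only assumptions for illustration, not the rates of my recordings.

```python
def samples_spanned(duration_s: float, sample_rate_hz: int) -> float:
    """Return how many audio samples cover an event of the given duration."""
    return duration_s * sample_rate_hz


# Durations quoted from the paper (in seconds).
EVENTS = {
    "shockwave N-wave": (200e-6, 300e-6),
    "muzzle blast initial period": (1e-3, 5e-3),
}

# Assumed sample rates, chosen only for illustration.
for rate in (16_000, 48_000):
    for name, (shortest, longest) in EVENTS.items():
        low = samples_spanned(shortest, rate)
        high = samples_spanned(longest, rate)
        print(f"{rate} Hz, {name}: {low:.1f} to {high:.1f} samples")
```

At the assumed 16 kHz, the whole N-wave would span only about 3 to 5 samples, so the sample rate probably matters a lot for the shockwave class.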

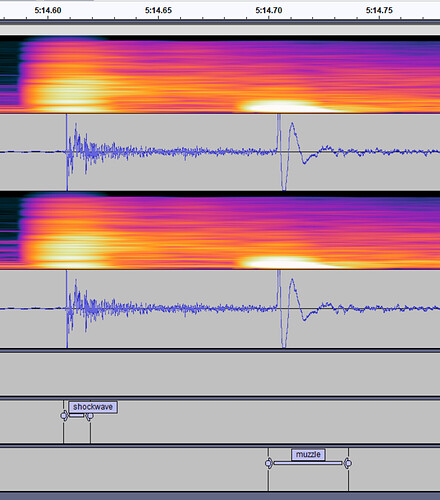

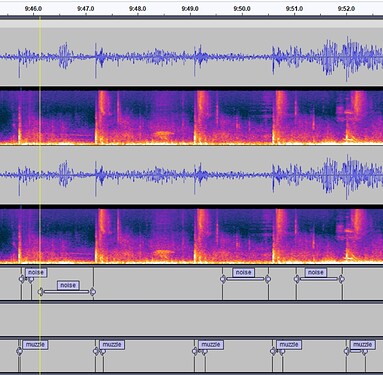

And indeed this is (more or less) what the waves look like in my data:

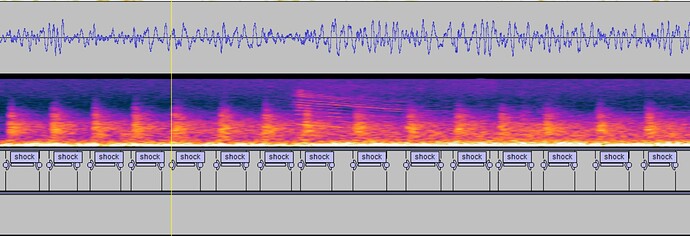

If the bullet is subsonic, there will be no shockwave, only a muzzle blast:

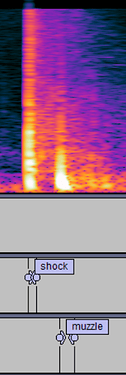

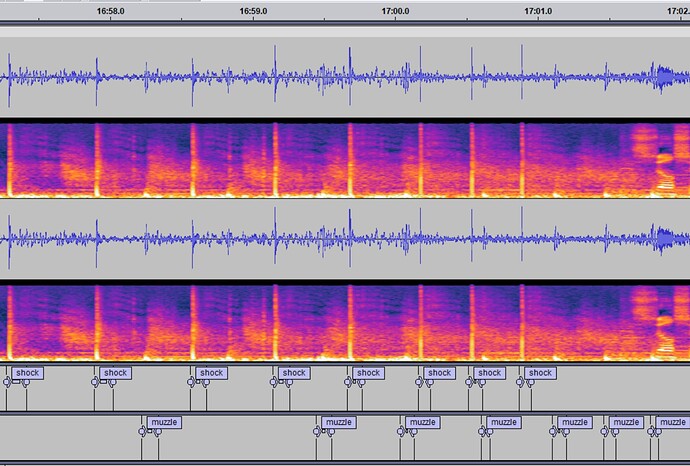

Vice versa, if the gun is silenced, there will be a shockwave but no muzzle blast (notice the horizontal lines; they are the “bzzz” from the bullet once it has already slowed to subsonic speed):

Unfortunately, if a silenced gun is used along with subsonic ammunition, not much will be picked up.

Luckily, poachers usually use homemade silencers on long rifles (so some muzzle blast will be heard) and they use supersonic ammunition, so a bullet shockwave should be heard as well.

What is important for me is the accuracy of the timestamp of the event, not so much how long the algorithm takes to recognize the event.

Meaning I need to know exactly when the event happened (within a few milliseconds), but I don’t mind waiting several seconds for the answer (within 5 seconds if possible, but this is not critical).

Question: Will the timestamp be determined by the beginning of the window, the end of the window, or the exact event (wave spike)? Forgive me if this seems like a silly/trivial question, but I have no idea, and an error of milliseconds will later translate into an error of meters in positioning.
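To make the stakes concrete, here is a minimal sketch of the arithmetic, plus one possible way to refine a timestamp inside a window by picking the sample with the largest absolute amplitude. The speed of sound (343 m/s), the 16 kHz sample rate in the usage lines, and both function names are my own assumptions; I do not know whether the inference output reports anything like this, which is exactly my question.

```python
SPEED_OF_SOUND_M_S = 343.0  # assumption: dry air at roughly 20 degrees C


def timing_error_to_distance_m(error_s: float) -> float:
    """Distance sound travels during a timing error of error_s seconds."""
    return error_s * SPEED_OF_SOUND_M_S


def event_time_s(window_start_s: float, window_samples: list, sample_rate_hz: int) -> float:
    """Timestamp of the largest absolute amplitude inside one window.

    Hypothetical helper: plain peak picking, not an Edge Impulse feature.
    """
    peak_index = max(range(len(window_samples)), key=lambda i: abs(window_samples[i]))
    return window_start_s + peak_index / sample_rate_hz


# A 60 ms window at an assumed 16 kHz holds 960 samples; put a spike in the middle.
window = [0.0] * 960
window[480] = -0.9
print(event_time_s(12.0, window, 16_000))   # spike sits 30 ms after the window start
print(timing_error_to_distance_m(0.060))    # error if only the window edge were known
```

If only the start or end of a 60 ms window were reported, the uncertainty would be on the order of 20 m under these assumptions, while a per-sample peak would bring it down to well under a meter.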

Question: For the data, I extracted .wav files that are 100 milliseconds long on average. Is this correct? (The method is to extract the files from the manual labels shown in the previous images.)

Question: I also labeled as muzzle the sound of the weapon fired from close by, since technically it is a muzzle blast, but to the eye it looks very different. Will this induce errors?

The noise samples I extracted are between 500 ms and 1 second long on average.

To start, I have 110 samples for muzzle, 86 for shockwave and 90 for noise in total. The train/test split is 80/20.

Questions: I obtained better results when I increased the window size to 60 ms and the window increase to 20 ms, compared to lower values such as 20 ms and 8 ms.

What is the risk of increasing the window too much?

What will happen if there is a shockwave and a muzzle blast in the same window? Will I get both results, or will one be masked? What should I do in this case: take the overlapping sounds (they are not exactly in sync but shifted, so it would be 2 different audio clips) and label them accordingly?
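For reference, this is how I picture the sliding windows being cut from one of my 100 ms samples. It is only a sketch under my own assumptions: the function name is hypothetical, and I assume that windows which do not fully fit in the sample are dropped (the real tool may pad them instead).

```python
def window_starts_ms(sample_ms: int, window_ms: int, increase_ms: int) -> list:
    """Start offsets (in ms) of every full window that fits inside the sample.

    Assumption: partial windows at the end are dropped, not zero-padded.
    """
    if window_ms > sample_ms:
        return []
    return list(range(0, sample_ms - window_ms + 1, increase_ms))


# The two settings I compared, applied to a 100 ms sample.
large = window_starts_ms(100, 60, 20)
small = window_starts_ms(100, 20, 8)
print(len(large), large)
print(len(small), small)
```

Under these assumptions the 60 ms / 20 ms setting yields 3 windows per 100 ms sample and the 20 ms / 8 ms setting yields 11, so with the small window many of the windows cut from an event sample may contain little or none of the actual spike.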

I am using the standard MFE processing block and the classifier learning block.

Question: Should I change the standard parameters in the MFE processing block for this scenario?

The classifier learning block also runs with the standard settings, except that I increased the training cycles to 300 and changed the learning rate to 0.0005.

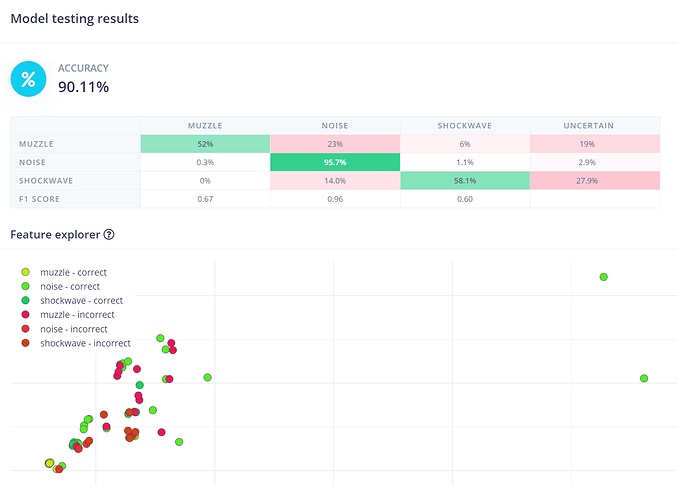

In the end I get 92.6% accuracy and 0.27 loss for the model. It looks good to me, but maybe it is overfitting?

Testing the model with 64 samples gives an accuracy of 90.11%. Again, I have no idea if this is good enough.

The idea would be to run it on a Raspberry Pi Pico (RP2040) or some other low-cost device.

Any input would be highly appreciated.