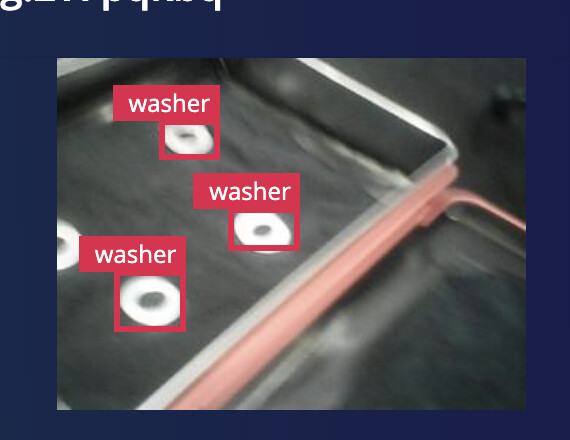

I have a FOMO model that is too sensitive, so I am experimenting with the `object_weight` setting (default 100) in advanced mode. Note that the background weight is 1.0, compared to the default `object_weight` of 100.
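For anyone less familiar with how these two numbers interact, here is a minimal sketch. It assumes the FOMO loss is a per-cell weighted cross-entropy in which background cells are weighted 1.0 and every object class is weighted `object_weight`. The function `weighted_cell_cross_entropy` and the toy 1×2 grid are hypothetical illustrations, not Edge Impulse's actual implementation.

```python
import numpy as np

def weighted_cell_cross_entropy(y_true, y_pred, object_weight=100.0, eps=1e-7):
    """Hypothetical per-cell weighted cross-entropy over a FOMO-style grid.

    y_true: one-hot labels, shape (H, W, C); channel 0 is background.
    y_pred: softmax probabilities, same shape.
    Assumption: background is weighted 1.0, every object class object_weight.
    """
    num_classes = y_true.shape[-1]
    # Class-weight vector: 1.0 for background, object_weight for each object class
    class_weights = np.array([1.0] + [float(object_weight)] * (num_classes - 1))
    # Clip so log() never sees 0
    y_pred = np.clip(y_pred, eps, 1.0)
    # Cross-entropy per cell, scaled by the weight of that cell's true class
    per_class = -y_true * np.log(y_pred) * class_weights
    return per_class.sum(axis=-1)  # shape (H, W)

# Toy 1x2 grid, 2 classes (background, object):
#   cell 0 is truly background but predicted as object (false positive)
#   cell 1 is truly an object but predicted as background (missed object)
y_true = np.array([[[1.0, 0.0], [0.0, 1.0]]])
y_pred = np.array([[[0.1, 0.9], [0.9, 0.1]]])

for w in (100, 5):
    loss = weighted_cell_cross_entropy(y_true, y_pred, object_weight=w)
    print(f"object_weight={w}: false positive={loss[0, 0]:.2f}, missed object={loss[0, 1]:.2f}")
```

With the same 0.1 probability on the true class, the missed-object cell costs `object_weight` times as much as the false-positive cell, so lowering `object_weight` makes false positives relatively more expensive during training.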

In the code below, I set `object_weight` to 5 to give the background class relatively more weight and get fewer false positives. Does anyone have experience with this? I will know soon whether 5 was a good setting, but does anyone have other suggestions?

```python
model = train(num_classes=classes,
              learning_rate=LEARNING_RATE,
              num_epochs=EPOCHS,
              alpha=0.35,
              object_weight=5,  # lowered from the default of 100
              train_dataset=train_dataset,
              validation_dataset=validation_dataset,
              best_model_path=BEST_MODEL_PATH,
              input_shape=MODEL_INPUT_SHAPE,
              lr_finder=False)
```

…

A few minutes later…

It doesn’t seem to make a dramatic difference. I may be getting a few more background classifications relative to the other FOMO objects, but it's not obvious. `object_weight` is an integer, so I guess the lowest I can go is 1.
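To put rough numbers on the rebalancing, the sketch below computes what fraction of the total class weight sits on object cells for `object_weight` values of 100, 5 and 1. It assumes a hypothetical 12×12 output grid (for example a 96×96 input at 1/8 resolution) with 3 object cells, and it treats every cell's unweighted loss as equal. It only illustrates the weighting, not actual training behavior, and `loss_weight_share` is a made-up helper.

```python
def loss_weight_share(object_weight, grid_cells=144, object_cells=3):
    """Fraction of the total class weight carried by object cells.

    Hypothetical setup: a 12x12 grid (144 cells) where only a few cells
    contain objects; background cells are weighted 1.0.
    """
    background_cells = grid_cells - object_cells
    object_total = object_cells * object_weight
    background_total = background_cells * 1.0  # background weight is 1.0
    return object_total / (object_total + background_total)

for w in (100, 5, 1):
    print(f"object_weight={w:>3}: objects carry {loss_weight_share(w):.0%} of the weight")
# object_weight=100: objects carry 68% of the weight
# object_weight=  5: objects carry 10% of the weight
# object_weight=  1: objects carry 2% of the weight
```

Under these assumptions, most of the shift happens between 100 and 5 (about 68% down to 10%); going from 5 to 1 moves it much less (10% down to 2%).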