One of my models is used to classify sounds of a machine.

The condition of the machine only becomes visible when it is loaded. When it runs without load, the readings are almost the same, and the classification results are not that useful.

I'm thinking of perhaps just finding a filter or averaging algorithm to process the result, or a state machine and some counters, or maybe some other kind of thresholding.

Are there any sources of inspiration for classifier post-processing? Or how might I process my features (spectrograms from the MFE block) enough to be able to use them for anomaly detection, perhaps?

br

Opprud

Hi @morten_ece_au,

Right now, the Edge Impulse studio only supports anomaly detection for simple, 1D type of data (e.g. accelerometer). Detecting anomalies for audio and image data is something we hope to support in the future. Do you have the sounds of the unloaded machine in your dataset? If you added an “unloaded” class, that may be the best approach right now.
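
If you do add an "unloaded" class, one way to use it is to skip any window where that class dominates, so only loaded windows feed the condition decision. Here is a minimal sketch of that idea. The `WindowResult` struct, the label name `unloaded`, and the 0.6 gate are all assumptions for illustration, not the SDK's result type or a recommended value.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical container for one inference window: labels and scores are
// parallel arrays. This is NOT the Edge Impulse SDK result type.
struct WindowResult {
    std::vector<std::string> labels;
    std::vector<float> scores;
};

// Returns true when the window should be used for condition monitoring,
// i.e. the (assumed) "unloaded" class scored below the gate threshold.
bool is_loaded_window(const WindowResult& r, float unloaded_gate = 0.6f) {
    for (std::size_t i = 0; i < r.labels.size(); ++i) {
        if (r.labels[i] == "unloaded") {
            return r.scores[i] < unloaded_gate;
        }
    }
    return true;  // no "unloaded" class present: keep every window
}
```

Windows that fail this check would simply be ignored rather than counted as healthy or faulty.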

I took a peek at your project–I also recommend setting the noise floor of the MFE block to something like -100 instead of -52, as your data is pretty quiet. The noise floor setting removes any sounds less than a certain dB level.

For classifier post-processing, I recommend checking out the last section of my course (https://www.coursera.org/learn/introduction-to-embedded-machine-learning) where I talk about a few ways to do such post-processing. It’s free to sign up, and you can just skip to the videos you need.
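
As a rough illustration of the averaging-plus-counters idea from your question (not necessarily what the course covers), a sketch could look like the following. The class name `ScoreDebouncer` and all parameter values are hypothetical: it takes a moving average of one class score and only raises an alarm once that average has stayed above a threshold for a number of consecutive windows.

```cpp
#include <cstddef>
#include <deque>

// Smooths one class score with a moving average, then requires the average
// to stay above a threshold for several consecutive windows before alarming.
class ScoreDebouncer {
public:
    ScoreDebouncer(std::size_t window, float threshold, int required_hits)
        : window_(window), threshold_(threshold), required_hits_(required_hits) {}

    // Feed the latest score (0..1) for the class of interest.
    // Returns true while the alarm state is active.
    bool update(float score) {
        history_.push_back(score);
        sum_ += score;
        if (history_.size() > window_) {
            sum_ -= history_.front();  // drop the oldest score from the sum
            history_.pop_front();
        }
        const float mean = sum_ / static_cast<float>(history_.size());
        if (mean >= threshold_) {
            if (hits_ < required_hits_) ++hits_;  // count consecutive hits
        } else {
            hits_ = 0;  // any window below threshold resets the counter
        }
        return hits_ >= required_hits_;
    }

private:
    std::size_t window_;
    float threshold_;
    int required_hits_;
    std::deque<float> history_;
    float sum_ = 0.0f;
    int hits_ = 0;
};
```

You would call `update()` once per inference with the score of the class you care about, for example `ScoreDebouncer alarm(5, 0.7f, 3);` and then `alarm.update(score)`. Those numbers are placeholders and would need tuning on your own recordings.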

To find the features (e.g. spectrograms), I recommend downloading the C++ library for your project (after training a model). In there, you can find the run_classifier() function in the edge-impulse-sdk/classifier/ei_run_classifier.h file. The features are placed in a features_matrix buffer. It’s fairly buried in the library’s source code, but it is possible to access that data.

Note that run_classifier() is called if you’re not doing a continuous sliding window across recorded data for inference. If you are, then run_classifier_continuous() is likely called instead. That function uses static_features_matrix to store the features instead.

Hope that helps!


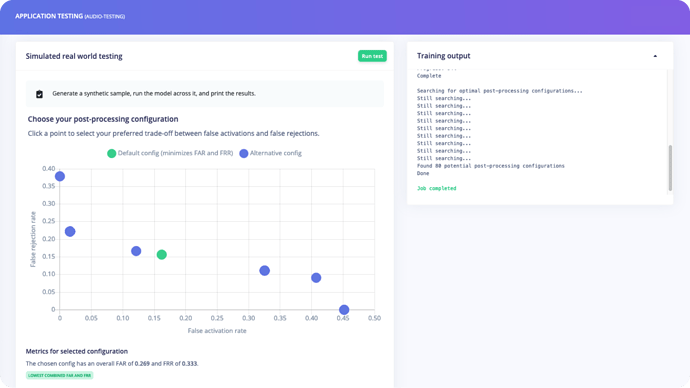

Hi @morten_ece_au Sidenote: we’re actually adding post-processing to Edge Impulse (currently in private alpha with some customers, but soon for everyone). It takes things like averaging, noise gates, sensitivity etc. into account, and runs a genetic algorithm to find the most promising options. See below for a variety of models found (each with a different False Reject Rate vs. False Activation Rate) so you can pick the one that covers your needs best.

Thanks Shawn & Jan, useful video and info.

Looking forward to trying out the post-processing features you are working on once they are public or in beta.
