I have an ESP32-CAM that runs inference when I click a button on the page served at its local IP address, but what I want is to run inference automatically every 2 seconds for a commercial product. How would I go about doing this?

This is the code I’m using: Examples/Advanced-Image-Classification in the edgeimpulse/example-esp32-cam repository on GitHub.

I recommend checking out this example in that same repo: https://github.com/edgeimpulse/example-esp32-cam/blob/main/Examples/Inference-on-boot/Inference-on-boot.ino

You’d probably want to ignore the SD card stuff, but the idea is that in the main loop() function, you would capture an image from the camera, convert it to a 96x96 RGB888 image (to match the expected input of the EI library), and call run_classifier() with that image data (see the classify() function).

Hope that helps!
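For the "every 2 seconds" part, a minimal sketch of a non-blocking timing gate is shown here. The helper name `inference_due` is hypothetical and not part of the example repo; it assumes you pass in a millisecond counter such as Arduino's `millis()`.

```cpp
#include <cstdint>

// Hypothetical helper: returns true once every interval_ms milliseconds
// and records the time of the run in last_run_ms.
// Unsigned subtraction keeps the comparison correct when the
// millisecond counter wraps around.
bool inference_due(uint32_t now_ms, uint32_t &last_run_ms, uint32_t interval_ms) {
    if (now_ms - last_run_ms < interval_ms) {
        return false;  // not enough time has passed yet
    }
    last_run_ms = now_ms;
    return true;
}

// In an Arduino sketch this could be used roughly as follows
// (assumption: your capture-and-classify code goes where indicated):
//
//   static uint32_t last_run_ms = 0;
//   void loop() {
//       if (inference_due(millis(), last_run_ms, 2000)) {
//           // capture a frame, resize it, call run_classifier()
//       }
//   }
```

Compared with a plain `delay(2000)`, this keeps `loop()` free to do other work between inferences, which may matter in a product.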

Thank you so much! I’ll try this. Does this work for running object detection on the ESP32, or just image classification? Also, is there a way to go higher than 96x96 (like 160x160)? Would that be too much for the ESP32 to handle? If so, would 96x96 be enough to detect an object in a small room?

Also, does it need the HTML and .cpp files like the other example has, or does it work as a standalone file?

Hi @shawnm1,

You should not need the html files for the example I listed, as it does not host a webpage.

I’m not sure if it’ll handle a 160x160 image. I think it should have enough memory, but note that in the demo code, you need to create multiple, full-image buffers when transforming the image (rescaling, grayscale to RGB). To get around this, you could do some of the transformation in the raw_feature_get_data() callback function. Your best bet would be to try it.
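As a rough illustration of doing the grayscale-to-RGB step inside the callback, here is a minimal sketch. It assumes the frame is already an 8-bit grayscale buffer at the model's input size, and that features are passed as one float per pixel holding a packed 0xRRGGBB value. The names `g_gray_frame` and `gray_get_data` are hypothetical; only the callback shape (offset, length, output pointer) follows what the library expects.

```cpp
#include <cstddef>
#include <cstdint>

// Hypothetical global: points at an 8-bit grayscale frame that has
// already been resized to the model's input resolution.
static const uint8_t *g_gray_frame = nullptr;

// Callback with the same shape as raw_feature_get_data().
// Each output float holds one pixel packed as 0xRRGGBB, so a gray
// value g is expanded to (g, g, g) on the fly instead of being
// written to a second full-size RGB buffer.
int gray_get_data(size_t offset, size_t length, float *out_ptr) {
    for (size_t i = 0; i < length; i++) {
        const uint32_t g = g_gray_frame[offset + i];
        out_ptr[i] = static_cast<float>((g << 16) | (g << 8) | g);
    }
    return 0;
}
```

With this approach only the grayscale buffer has to stay in memory while the classifier runs, which is the saving described above.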

Ok thanks! Would it work with object detection rather than image classification?

The only object detection that I believe will fit on the ESP32 is FOMO. The other models are too large if I recall.

But FOMO will work with this code?

It should work with FOMO, but you will need to change what you do with the inference information, as it’s now bounding boxes instead of classification results. For example:

    printf("Object detection bounding boxes:\r\n");
    for (uint32_t i = 0; i < EI_CLASSIFIER_OBJECT_DETECTION_COUNT; i++) {
        ei_impulse_result_bounding_box_t bb = result.bounding_boxes[i];
        if (bb.value == 0) {
            continue;
        }
        printf(" %s (%f) [ x: %u, y: %u, width: %u, height: %u ]\r\n",
               bb.label,
               bb.value,
               bb.x,
               bb.y,
               bb.width,
               bb.height);
    }

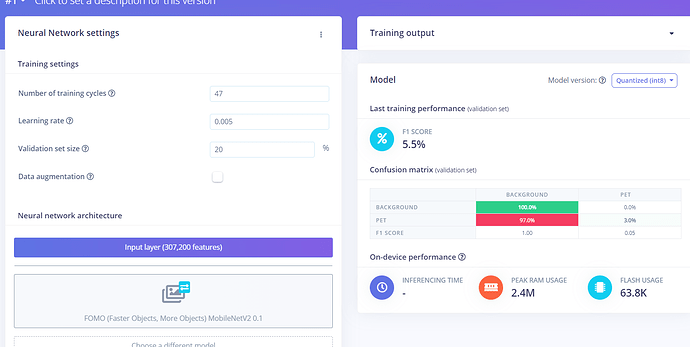

OK, thank you so much for the help! I do have one more issue, though. I hand-labeled over 3000 images, and yet when I trained the FOMO model, this is what happened.

Obviously this is terrible and I can’t use it. I’m not sure whether this is my fault or a software error, but something seems to be going wrong. Would you mind taking a look at it? I could add you as a collaborator to the project if you’d like. Thanks

Thank you. Here’s my project ID 116192

Hello @shawnm1,

I just had a quick look at your dataset, and it seems that the objects (your pets) take up a large portion of the frame in your images. This is not the ideal use case for FOMO, because the model is only trained on one small “cell” around the center of each labeled object, not on the whole bounding box.

If you want to understand more, here is a talk I gave to explain that (around min 15:30): AI Tech Talk from Edge Impulse: How to run object detection on Arm Cortex-M7 processors - YouTube

I also noticed that your model is trained on 320x320 images, which is probably too big to fit on the ESP32.

Best regards,

Louis
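To put the resolutions mentioned in this thread side by side, here is a small arithmetic sketch. It only counts one raw RGB888 image buffer (3 bytes per pixel); the model's own working memory comes on top of that and is not estimated here. The helper name `rgb888_bytes` is made up for this illustration.

```cpp
#include <cstddef>

// Bytes needed for one RGB888 image buffer (3 bytes per pixel).
constexpr size_t rgb888_bytes(size_t width, size_t height) {
    return width * height * 3;
}

// Buffer sizes for the resolutions discussed in the thread.
constexpr size_t kBytes96  = rgb888_bytes(96, 96);    // 27,648 bytes
constexpr size_t kBytes160 = rgb888_bytes(160, 160);  // 76,800 bytes
constexpr size_t kBytes320 = rgb888_bytes(320, 320);  // 307,200 bytes
```

A single 320x320 buffer is about 11 times the size of a 96x96 one, and the demo code keeps more than one full-image buffer around while transforming the image.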

Thanks for letting me know. I’ll change the resolution. Is there some code that could be run on the developer side that compares the size of each bounding box to the size of the image and deletes the box if it takes up more than 50% of the image? That way I wouldn’t have to do it manually for all the images. If not, that’s fine; I guess I’ll have a lot of work ahead of me.
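A minimal sketch of the check described here, assuming the labels can be exported as pixel-space boxes. The `Box` struct and both function names are hypothetical and not part of any Edge Impulse tooling; reading and writing the actual label files is left out.

```cpp
#include <cstdint>
#include <vector>

// Hypothetical label record: top-left corner plus size, in pixels.
struct Box {
    uint32_t x, y, width, height;
};

// True if the box covers more than max_fraction of the image area.
bool box_too_large(const Box &b, uint32_t img_w, uint32_t img_h,
                   double max_fraction = 0.5) {
    const double box_area = static_cast<double>(b.width) * b.height;
    const double img_area = static_cast<double>(img_w) * img_h;
    return box_area > max_fraction * img_area;
}

// Returns only the boxes that are at or below the area threshold.
std::vector<Box> keep_small_boxes(const std::vector<Box> &boxes,
                                  uint32_t img_w, uint32_t img_h,
                                  double max_fraction = 0.5) {
    std::vector<Box> kept;
    for (const Box &b : boxes) {
        if (!box_too_large(b, img_w, img_h, max_fraction)) {
            kept.push_back(b);
        }
    }
    return kept;
}
```

For a 320x320 image the threshold is 51,200 square pixels, so a 300x200 box would be dropped and a 100x100 box kept.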