Project ID:

363328

Context/Use case:

I’m following the example RC project to deploy an audio classification model to my Nicla Voice board. All the steps are the same as in the example, except that I modified the model in expert mode: I replaced the 2-conv model with a MobileNetV2 model (alpha = 0.1). Here is my modified code block:

import tensorflow as tf

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dense, InputLayer, Dropout, Conv1D, Conv2D, Flatten, Reshape, MaxPooling1D, MaxPooling2D, AveragePooling2D, BatchNormalization, Permute, ReLU, Softmax

from tensorflow.keras.optimizers.legacy import Adam

import math, requests

from tensorflow.keras import Model

from tensorflow.keras.layers import (

    Dense, InputLayer, Dropout, Conv1D, Flatten, Reshape, MaxPooling1D, BatchNormalization,

    Conv2D, GlobalMaxPooling2D, Lambda, GlobalAveragePooling2D)

from tensorflow.keras.optimizers.legacy import Adam, Adadelta

from tensorflow.keras.losses import categorical_crossentropy

EPOCHS = args.epochs or 150

LEARNING_RATE = args.learning_rate or 0.002

# If True, non-deterministic functions (e.g. shuffling batches) are not used.

# This is False by default.

ENSURE_DETERMINISM = args.ensure_determinism

# this controls the batch size, or you can manipulate the tf.data.Dataset objects yourself

BATCH_SIZE = args.batch_size or 32

if not ENSURE_DETERMINISM:

    train_dataset = train_dataset.shuffle(buffer_size=BATCH_SIZE*4)

train_dataset=train_dataset.batch(BATCH_SIZE, drop_remainder=False)

validation_dataset = validation_dataset.batch(BATCH_SIZE, drop_remainder=False)

channels = 1

columns = 40

rows = int(input_length / (columns * channels))

INPUT_SHAPE = (rows, columns, channels)

base_model = tf.keras.applications.MobileNetV2(

    input_shape=INPUT_SHAPE, alpha=0.1,

    weights=None

)

base_model.trainable = True

model = Sequential()

model.add(Reshape(INPUT_SHAPE, input_shape=(input_length, )))

model.add(Permute((2,1,3))) # H and W are reversed for NDP120 Conv 2D input

model.add(InputLayer(input_shape=INPUT_SHAPE, name='x_input'))

last_layer_index = -3

model.add(Model(inputs=base_model.inputs, outputs=base_model.layers[last_layer_index].output))

model.add(Reshape((-1, model.layers[-1].output.shape[3])))

model.add(Dense(8, activation='relu'))

model.add(Dropout(0.1))

model.add(Flatten())

model.add(Dense(classes, activation='softmax'))

# this controls the learning rate

opt = Adam(learning_rate=LEARNING_RATE, beta_1=0.9, beta_2=0.999)

callbacks.append(BatchLoggerCallback(BATCH_SIZE, train_sample_count, epochs=EPOCHS, ensure_determinism=ENSURE_DETERMINISM))

# # train the neural network

# model.compile(loss='categorical_crossentropy', optimizer=opt, metrics=['accuracy'])

# model.fit(train_dataset, epochs=EPOCHS, validation_data=validation_dataset, verbose=2, callbacks=callbacks)

model.compile(optimizer=tf.keras.optimizers.Adam(learning_rate=LEARNING_RATE),

              loss='categorical_crossentropy',

              metrics=['accuracy'])

model.fit(train_dataset, validation_data=validation_dataset, epochs=EPOCHS, verbose=2, callbacks=callbacks)

# Use this flag to disable per-channel quantization for a model.

# This can reduce RAM usage for convolutional models, but may have

# an impact on accuracy.

disable_per_channel_quantization = False

Error:

After training the model for 150 epochs, I got good precision. However, when I tried to build the firmware, the build failed with the output below:

Creating job... OK (ID: 17614803)

Scheduling job in cluster...

Container image pulled!

Job started

Writing templates OK

Scheduling job in cluster...

Container image pulled!

Job started

Removing clutter...

Removing clutter OK

Copying output...

Copying output OK

Scheduling job in cluster...

Container image pulled!

Job started

generate_synpkg for NDP120

Extracting model...

Extracting model OK

Found Audio block

Loading neural network...

Loading neural network OK

Loading data set...

Loading data set OK, found 3232 items

Converting sequential model to functional API...

Reshape layer detected

(None, 40, 40, 1)

Skipping Permute layer

Reshape layer detected

(None, 4, 1280)

Traceback (most recent call last):

File "/app/generate_synpkg.py", line 206, in <module>

print('Reshape layer should contain 40 columns', e, flush=True)

NameError: name 'e' is not defined

Application exited with code 1

Job failed (see above)
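
My reading of the traceback, which may be wrong since I can't see generate_synpkg.py: the NameError comes from the print statement that was supposed to report the problem, so the real complaint is probably the message itself, "Reshape layer should contain 40 columns", triggered by my second Reshape layer, whose output is (None, 4, 1280). The sketch below is a hypothetical stand-in, not the actual script. The function name, the constant, and the idea that the check looks at the third dimension are all my assumptions; it only shows how a message that references an undefined name e ends up replaced by a NameError.

```python
# Hypothetical stand-in for the failing check. The real generate_synpkg.py
# is not available to me, so every name and the check itself are assumptions.
EXPECTED_COLUMNS = 40


def report_bad_reshape(output_shape):
    """Mimic a shape check whose error message references an undefined `e`."""
    if output_shape[2] != EXPECTED_COLUMNS:
        # `e` is never assigned anywhere, so Python raises NameError
        # before the intended message can be printed.
        print('Reshape layer should contain 40 columns', e, flush=True)


# The first Reshape in the log passes this check without any output.
report_bad_reshape((None, 40, 40, 1))

# The second Reshape fails, and the NameError hides the intended message.
try:
    report_bad_reshape((None, 4, 1280))
    masked_error = None
except NameError as err:
    masked_error = str(err)

print(masked_error)
```

If that reading is right, the thing to fix on my side is the shape coming out of the second Reshape, not the NameError.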

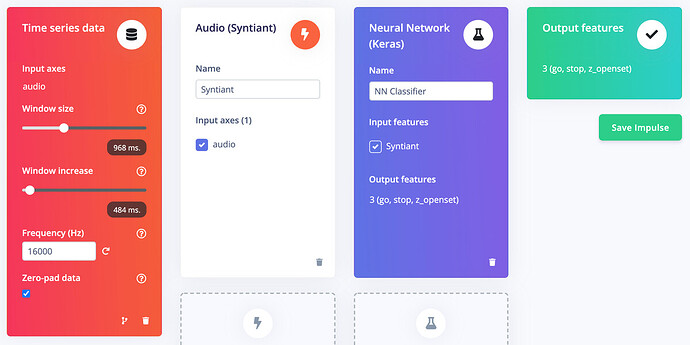

I’m not very familiar with Syntiant, but its tooling seems to be tightly coupled with Edge Impulse. For example, I could not find the generate_synpkg.py file anywhere, so I would really appreciate your help.

Guess:

I noticed a comment in the default expert-mode model code block: "# Syntiant TDK supports only valid padding". This is only a guess, but could that be the reason for the error?
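
One more observation: the (None, 4, 1280) in the log matches what I would expect from my own model, so the converter seems to be reading it correctly. Here is a rough sketch of the arithmetic, assuming input_length is 1600 (inferred from the (None, 40, 40, 1) line in the log) and that MobileNetV2 halves each spatial dimension five times, rounding up. It is plain Python rather than TensorFlow, and feature_map_shape is just a helper name I made up for illustration.

```python
import math


def feature_map_shape(rows, columns, last_channels=1280, stride2_stages=5):
    """Estimate the MobileNetV2 feature-map shape before global pooling.

    Assumes five stride-2 stages that each halve height and width,
    rounding up, and 1280 channels in the last conv block (alpha <= 1.0).
    """
    height, width = rows, columns
    for _ in range(stride2_stages):
        height, width = math.ceil(height / 2), math.ceil(width / 2)
    return (height, width, last_channels)


# Assumption: input_length = 1600, inferred from (None, 40, 40, 1) in the log.
input_length = 1600
channels = 1
columns = 40
rows = int(input_length / (columns * channels))

features = feature_map_shape(rows, columns)

# Reshape((-1, channels)) flattens height * width into a single axis.
reshaped = (features[0] * features[1], features[2])

print("input shape:", (rows, columns, channels))
print("feature map:", features)
print("after Reshape:", reshaped)
```

So the second Reshape ends up with 1280 in the position where the first one has 40, which may be what the "40 columns" message is about.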