Re: the 0.99609: that's because the output layer of the network is quantized, so the highest value is 255 and the quantization scale is 0.00390625, and 255 × 0.00390625 = 0.99609375 (shown as 0.99609).
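For anyone who wants to check the arithmetic, here is a minimal Python sketch of dequantizing an 8-bit output value. The scale is the one quoted above; the zero point of 0 is an assumption for illustration, since the real value comes from the model's quantization parameters.

```python
# Dequantize an 8-bit quantized output: real = scale * (q - zero_point).
SCALE = 0.00390625  # 1/256, the scale quoted in this thread
ZERO_POINT = 0      # assumption for illustration, read it from your own model


def dequantize(q, scale=SCALE, zero_point=ZERO_POINT):
    """Map a raw quantized value back to a floating-point score."""
    return scale * (q - zero_point)


# The largest raw value (255) gives the largest score the layer can report.
max_score = dequantize(255)
print(max_score)  # 0.99609375
```

So a score of 0.99609 simply means the output is saturated at its maximum; it can never reach exactly 1.0 with this scale.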

hi @janjongboom

Well, I understand that. But my question is why, no matter what kind of photos I take, the output is the same. It seems the model does not behave on the device the way it does on the Edge Impulse platform, so I don't know where the problem is.

“No matter what kind of photos are taken, only the scores of two categories are changing. As shown in the figure below, the scores of dandelion & unknown are always zero. But when I save the photos and import them into the edge impulse project as testing dataset, there are scores for all four categories.”

Best,

Jifly

Hi @jifly,

Is it still the case with the advanced example?

I could see the scores varying in all categories.

Best,

Louis

hi @louis

I haven’t had time to check the latest demo. I’ll check it and update the results soon.

thank you for your great support.

Best,

Jifly

Hi, I'm trying the tutorial on GitHub for classification with the ESP32 cam. I am trying to classify 3 items from the Fashion MNIST database. The model on EI is fine, but when I deploy it to the device, the inference is always 99% on the same label.

I tried choosing the non-quantized option in the optimization settings, but I get this:

" failed to allocate tensor arena

Failed to allocate TFLite arena (error code 1)

run_classifier returned: -6"

Any tips for me?

Hi @gransasso, this is a memory allocation issue: we cannot allocate enough memory to run the neural network. Using a smaller transfer learning model or a smaller neural network will resolve this.

@louis Could the example skip printing any classification results when run_classifier returns an error? That would avoid giving the impression that inference succeeded.
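The idea can be sketched in a few lines of Python. This is only an illustration of the guard, not the Edge Impulse SDK: `run_classifier_stub` and `report` are hypothetical names, and the stub just returns the -6 error code seen in the log above.

```python
def run_classifier_stub():
    """Hypothetical stand-in for the real call: returns (error_code, scores)."""
    return -6, None  # simulate the arena allocation failure reported above


def report(err, scores):
    """Format results only when inference succeeded (error code 0)."""
    if err != 0:
        return f"run_classifier returned: {err} (no predictions shown)"
    return ", ".join(f"{label}: {score:.5f}" for label, score in scores.items())


# Failure case: the error is reported and no stale scores are printed.
print(report(*run_classifier_stub()))

# Success case with made-up scores.
print(report(0, {"sneaker": 0.99609, "shirt": 0.00391}))
```

The point is just that the printing code returns early on a non-zero error code, so leftover values in the result buffer are never shown as predictions.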

Indeed the RAM usage for the unoptimized model is too big to fit on the ESP32 cam.

Let me try to run your model on my ESP32 if you don’t mind. I will see if I have the same issue where “the inference is always 99% on the same label.”

I'll let you know.

Hope you understand the Italian labels.

I also get the same classification each time I run the inference.

Let me double-check the code, especially the resize function, to see if anything seems weird…

I’ve just noticed that you are using greyscale, whereas I used RGB.

The issue might come from there.

I’ll check again later today to see if I can get better results.

Regards,

Hello @gransasso,

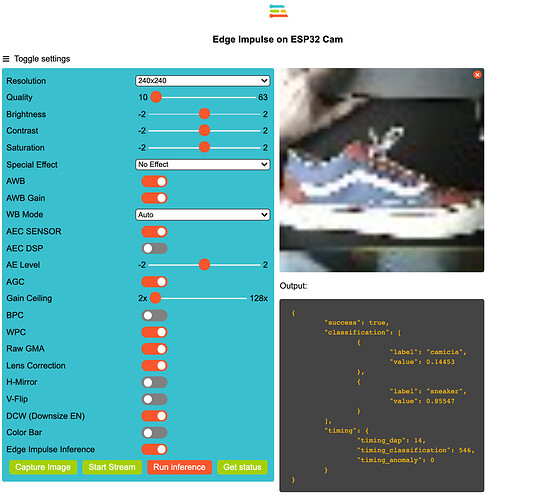

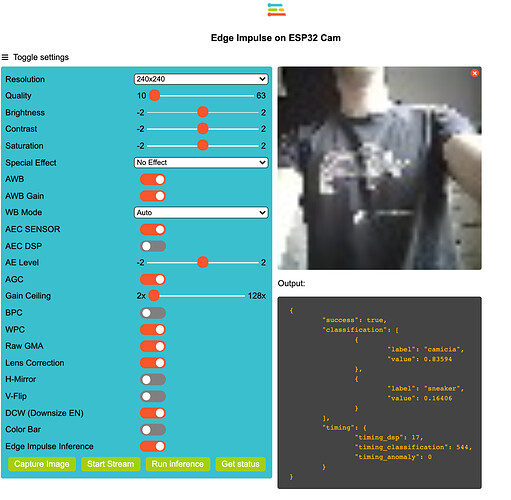

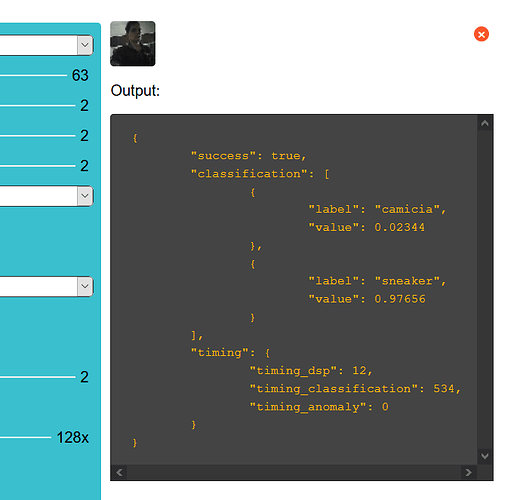

I see that you just fixed your images to RGB:

I just ran your model with my ESP32 cam and it seems to work now:

Let me know if it worked on your side.

Regards,

Louis

Really? Maybe my ESP is broken. I reduced the categories to just two to simplify things, but I keep getting the sneaker category with a confidence of at least 85%.

The size of the image is just a display bug. If you use Chrome and shrink the window, the image should get bigger at some point.

Can you try placing a sneaker against a dark background and try again? I suspect your model performs well on the images from the Fashion MNIST database but not so well in real conditions…

Also, to test whether your ESP classification gives the same results as the one in the Studio, you can:

- Save your image

- Upload it to the Studio (either with the CLI or directly using the online uploader)

- Compare the results

Then you will see if the issue comes from the embedded code or the model.
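The comparison step can be as simple as the Python sketch below. The function name, the tolerance of 0.05, and the score values are all hypothetical and only there for illustration; use the scores you actually read from the serial output and from the Studio.

```python
def compare_scores(device, studio, tolerance=0.05):
    """Return the labels whose scores differ by more than `tolerance`."""
    mismatches = {}
    for label in sorted(set(device) | set(studio)):
        d = device.get(label, 0.0)
        s = studio.get(label, 0.0)
        if abs(d - s) > tolerance:
            mismatches[label] = (d, s)
    return mismatches


# Made-up scores for the same saved image, not taken from this thread.
device_scores = {"sneaker": 0.99, "shirt": 0.01}
studio_scores = {"sneaker": 0.40, "shirt": 0.60}

mismatches = compare_scores(device_scores, studio_scores)
print(mismatches if mismatches else "device and Studio agree")
```

If the two sets of scores agree, the embedded code is reproducing the Studio and the model itself is the thing to look at. If they disagree, the embedded side (capture, resize, colour format, quantization) is the more likely suspect.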

Regards,

Louis

Yeah, I tried with a shirt on a black background and it seems to work better. In fact, I suspected from the beginning that this could be the problem.

Thanks

Hey Louis, I found this on https://www.pyimagesearch.com/2019/02/11/fashion-mnist-with-keras-and-deep-learning/:

At this point, you are properly wondering if the model we just trained on the Fashion MNIST dataset would be directly applicable to images outside the Fashion MNIST dataset?

The short answer is “No, unfortunately not.”

The longer answer requires a bit of explanation.

To start, keep in mind that the Fashion MNIST dataset is meant to be a drop-in replacement for the MNIST dataset, implying that our images have already been processed.

Each image has been:

- Converted to grayscale.

- Segmented, such that all background pixels are black and all foreground pixels are some gray, non-black pixel intensity.

- Resized to 28×28 pixels.

For real-world fashion and clothing images, you would have to preprocess your data in the same manner as the Fashion MNIST dataset.

And furthermore, even if you could preprocess your dataset in the exact same manner, the model still might not be transferable to real-world images.
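To make the three preprocessing steps quoted above concrete, here is a rough pure-Python sketch. It is only an illustration: the fixed threshold of 40 is an assumed value and a very crude stand-in for real segmentation, the resize is plain nearest-neighbour, and the input frame is synthetic. It is not how the Fashion MNIST images were actually produced.

```python
def to_grayscale(rgb_image):
    """Convert rows of (r, g, b) tuples (0-255) to luma using BT.601 weights."""
    return [[round(0.299 * r + 0.587 * g + 0.114 * b) for (r, g, b) in row]
            for row in rgb_image]


def black_out_background(gray, threshold=40):
    """Crude segmentation: pixels darker than `threshold` become pure black."""
    return [[p if p >= threshold else 0 for p in row] for row in gray]


def resize_nearest(gray, size=28):
    """Nearest-neighbour resize to size x size pixels."""
    h, w = len(gray), len(gray[0])
    return [[gray[y * h // size][x * w // size] for x in range(size)]
            for y in range(size)]


def preprocess(rgb_image):
    return resize_nearest(black_out_background(to_grayscale(rgb_image)))


# Synthetic 56x56 frame: dark background with a bright square in the centre.
frame = [[(200, 200, 200) if 14 <= x < 42 and 14 <= y < 42 else (10, 10, 10)
          for x in range(56)] for y in range(56)]

out = preprocess(frame)
print(len(out), len(out[0]))  # 28 28
```

A threshold like this only works when the background really is dark, which fits the observation earlier in the thread that a black background improved the results.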

So I chose a bad dataset, sorry for wasting your time.

It seems I managed to figure out what the problem was.

I used a very small pre-trained model, so I was able to avoid quantizing the model during deployment.

It seems that quantization was hurting the accuracy of the model after deployment.

@louis @janjongboom & all others, Thanks a lot for this entire thread. I had the same issues and resolved almost everything with your help.

@gransasso Yeah we have some ideas on fixing quantization error on smaller image models (such as using a different dataset to find quantization parameters, and quantization-aware training) that @dansitu is working on.
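As a generic illustration of why the data used to find quantization parameters matters, here is a small Python sketch of affine 8-bit quantization. It is not Edge Impulse's implementation; the function names and all the numbers are assumptions chosen for the example.

```python
def quant_params(min_val, max_val, num_levels=256):
    """Derive scale and zero point so [min_val, max_val] spans 0..255."""
    scale = (max_val - min_val) / (num_levels - 1)
    zero_point = round(-min_val / scale)
    return scale, zero_point


def quantize(x, scale, zero_point):
    """Quantize to an integer and clamp to the 8-bit range."""
    q = round(x / scale) + zero_point
    return max(0, min(255, q))


def dequantize(q, scale, zero_point):
    return scale * (q - zero_point)


def round_trip_error(values, calibration):
    """Worst-case error when `values` use parameters fitted on `calibration`."""
    scale, zp = quant_params(min(calibration), max(calibration))
    return max(abs(v - dequantize(quantize(v, scale, zp), scale, zp))
               for v in values)


activations = [0.0, 0.1, 0.5, 0.9, 1.0]  # hypothetical values seen on device
good_calibration = [0.0, 1.0]            # covers the real range
poor_calibration = [0.0, 0.25]           # too narrow: larger values saturate

print(round_trip_error(activations, good_calibration))
print(round_trip_error(activations, poor_calibration))
```

With parameters fitted on a range that matches the real inputs the error stays below one quantization step, while a range that is too narrow clips the larger values and the error becomes large.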

First, @Louis, thanks for the work you shared for the ESP32 cam!

I’m a teacher and rather new to ML, but I hope to bring ML to my classes.

The ESP32 cam would be a great tool as our budget is rather small and our students are only familiar with the Arduino environment.

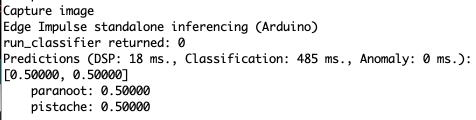

To get familiar with it, I trained a model for classifying nuts as pistachio nuts or as Brazil nuts and tried to keep it as small as possible. When I test the model in EI, it gives normal results, but when I run inference on the ESP32 cam, I always get the same result: 0.5000 vs 0.5000.

I tried to run the model with both the basic and the advanced image classification example. I tried it on another ESP32 cam, but always with the same result.

Any idea what’s going wrong? Thanks a lot!