Hello

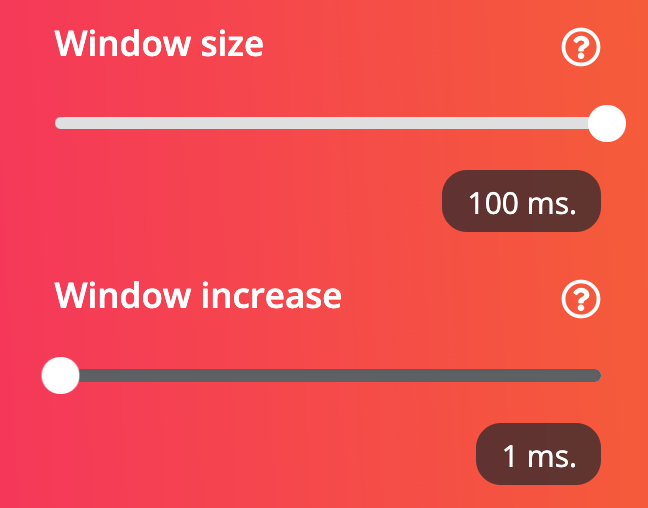

I’m trying to develop event detection for single tap, double tap, and directional tap (left to right, right to left) using the EI platform. I’m sampling data from an accelerometer at 100Hz. I loaded the samples into the EI platform, manually split out the events I want to classify (using time windows of 100ms, so I have 10 samples per window), and then trained the model. I got a training result of 92% and a test result of 89%. I loaded the model onto my hardware, where a circular buffer is filled with samples at 100Hz. When an event occurs, I center the event in a 10-sample buffer (100ms window) and feed it to the EI model, but I am not getting the desired results.
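A minimal sketch of the buffering described above, written in Python for readability rather than as the actual firmware. The names `RingBuffer` and `window_centered_on` are hypothetical, and the sketch assumes that whatever triggers on an event can supply the absolute sample index of that event.

```python
from collections import deque

SAMPLE_RATE_HZ = 100
WINDOW_MS = 100
# 100 Hz * 100 ms = 10 samples per window
WINDOW_SAMPLES = SAMPLE_RATE_HZ * WINDOW_MS // 1000


class RingBuffer:
    """Fixed-capacity circular buffer that tracks absolute sample indices."""

    def __init__(self, capacity):
        self.buf = deque(maxlen=capacity)  # oldest samples drop off automatically
        self.total = 0                     # number of samples pushed so far

    def push(self, sample):
        self.buf.append(sample)
        self.total += 1

    def window_centered_on(self, event_index, length=WINDOW_SAMPLES):
        """Return `length` samples centered on `event_index`.

        Returns None if part of the window has already been overwritten
        or has not been sampled yet.
        """
        start = event_index - length // 2
        end = start + length
        oldest = self.total - len(self.buf)  # absolute index of the oldest sample kept
        if start < oldest or end > self.total:
            return None
        offset = start - oldest
        return [self.buf[offset + i] for i in range(length)]
```

Note that centering the event means waiting for about half a window of samples after the trigger before the window can be extracted; until then the function returns `None`. Each sample can be a single value or a tuple such as `(x, y, z)`.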

Here is my question: visually, the accelerometer signal in EI looks “smooth”, when in fact a 100ms window only has 10 samples. Does EI interpolate the data before training on it?

I ask because on the hardware I’m not performing any interpolation. I just feed the model 100ms of data, i.e., 10 samples, when an event occurs.