Question/Issue:

I don’t understand what is wrong with the prediction.

Project ID:

147810

Context/Use case:

I am trying to use the C++ library on an STM32.

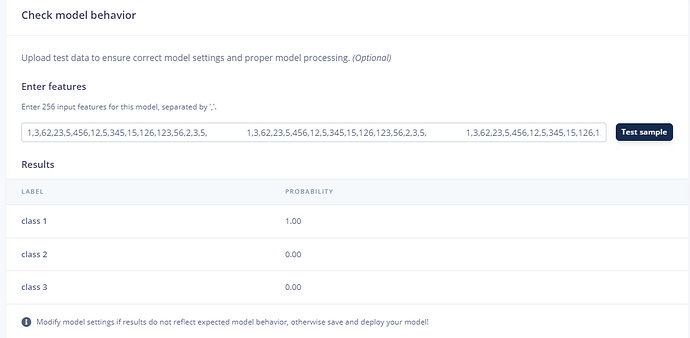

I can run the example on the Edge Impulse site, and it returns a probability of 1 for class 1.

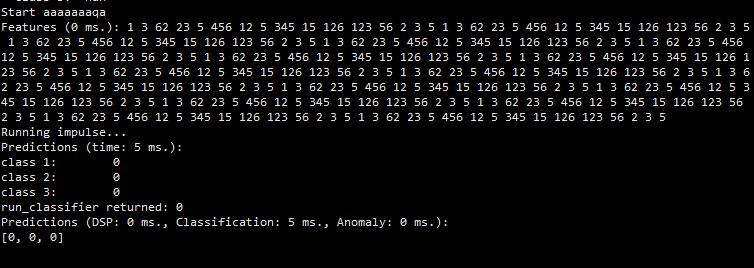

But the output is all zeros on the STM32 board.

`run_classifier` returns 0, which should mean the inference ran OK.

What could the issue be?

After several tries, it seems the problem comes from using this setting in TensorFlow.

Is that not supported by Edge Impulse? Or is it possible to use it, but with some additional settings or code?

Hi @uveuvenouve

We don’t support 16-bit quantisation, only 8-bit and 32-bit for now.

fyi @AIWintermuteAI

The TensorFlow Lite inferencing engine is more flexible in the data types it supports than TensorFlow Lite Micro, which is what is used if you’re deploying to a microcontroller.
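To make the data-type difference concrete, here is a minimal sketch of the two activation schemes involved. It assumes the usual TensorFlow Lite affine mapping, real = scale * (q - zero_point), with int8 activations carrying a zero point and int16 activations being symmetric (zero point 0), as far as I understand the TensorFlow Lite quantization spec. The function names and the scale values in the note below are made up for illustration and are not part of the Edge Impulse SDK or TensorFlow Lite Micro.

```cpp
#include <algorithm>
#include <cmath>
#include <cstdint>

// Hypothetical helpers for illustration only, not SDK or TFLM code.

// int8 activations: real = scale * (q - zero_point)
std::int8_t quantize_int8(float real, float scale, int zero_point) {
    long q = std::lround(real / scale) + zero_point;
    q = std::max(-128L, std::min(127L, q));  // saturate to the int8 range
    return static_cast<std::int8_t>(q);
}

float dequantize_int8(std::int8_t q, float scale, int zero_point) {
    return scale * static_cast<float>(static_cast<int>(q) - zero_point);
}

// int16 activations, assumed symmetric: real = scale * q
std::int16_t quantize_int16(float real, float scale) {
    long q = std::lround(real / scale);
    q = std::max(-32767L, std::min(32767L, q));  // saturate to a symmetric int16 range
    return static_cast<std::int16_t>(q);
}

float dequantize_int16(std::int16_t q, float scale) {
    return scale * static_cast<float>(q);
}
```

With an int8 scale of 1/256 and zero point of -128, and an int16 scale of 1/32768, the real value 0.5 is stored as 0 in the first case and as 16384 in the second, so the runtime has to know which scheme a tensor uses in order to read it back correctly.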

One thing I’d advise is to try switching off the EON compiler at deployment: choose the TensorFlow Lite option in the dropdown menu on the Deployment page. This is in case what you’re seeing is a quirk of the model compilation process. Remember to do a clean build afterwards.

If it still shows 0, then no dice: it means the current version of TensorFlow Lite Micro in our EI SDK indeed does not support the scheme with INT8 weights and 16-bit activations.
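Since a return value of 0 from `run_classifier` only says that the call itself completed, it can help to check the scores explicitly when comparing the two builds. Below is a minimal sketch of such a check, assuming the model ends in a softmax so the class scores should sum to roughly 1. `check_scores`, `ScoreCheck` and the tolerance value are hypothetical names of my own, not part of the Edge Impulse SDK.

```cpp
#include <cmath>
#include <cstddef>

// Hypothetical diagnostic helper, not part of the Edge Impulse SDK.
enum class ScoreCheck { Ok, AllZero, NotNormalized, NotFinite };

// Classifies a vector of class scores.
// Assumption: a healthy softmax output is finite and sums to about 1.
ScoreCheck check_scores(const float *scores, std::size_t count,
                        float tolerance = 0.05f) {
    float sum = 0.0f;
    bool any_nonzero = false;
    for (std::size_t i = 0; i < count; ++i) {
        if (!std::isfinite(scores[i])) {
            return ScoreCheck::NotFinite;  // NaN or infinity in the output
        }
        if (scores[i] != 0.0f) {
            any_nonzero = true;
        }
        sum += scores[i];
    }
    if (!any_nonzero) {
        return ScoreCheck::AllZero;  // the symptom reported in this thread
    }
    if (std::fabs(sum - 1.0f) > tolerance) {
        return ScoreCheck::NotNormalized;
    }
    return ScoreCheck::Ok;
}
```

On the device you would copy `result.classification[i].value` for each of the `EI_CLASSIFIER_LABEL_COUNT` labels into a float array after the call and pass it in. An `AllZero` result together with a 0 return code would match the behaviour described above.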