Hi, we have trained a model for classifying audio files. All our training and testing data are 5-second audio clips. We set the window size to 2 seconds and the window increment to 0.1 s. We deployed the C++ library locally on our Raspberry Pi and want to classify 5-second audio clips using the local model. We are wondering how audio files are classified on Edge Impulse. Do we need to go through all 30 windows, classify each of them, and then take the majority of the outputs to get the overall classification? This seems to be what our model is doing online. If this is not right, what is the model doing online to classify a clip? And how do we classify locally?

We tried running the model locally using this tutorial and we received the error:

bash: ./build/edge-impulse-standalone: Argument list too long

@Chloe, how you do the averaging is a bit up to you, we typically smooth out the results a bit to avoid false positives, but it requires a bit of playing around.
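As a rough illustration of the two obvious ways to combine per-window results, here is a minimal C++ sketch. It is not Edge Impulse's own aggregation (the thread does not say how the Studio combines windows), and every function name in it is hypothetical. It assumes each window has already been classified and produced one score per class. The first helper counts the full windows in a clip; for a 5 s clip with a 2 s window and a 0.1 s increment, that formula gives 31, close to the 30 mentioned in the question.

```cpp
#include <cstddef>
#include <stdexcept>
#include <vector>

// Number of full windows that fit in a clip (all arguments in milliseconds).
// Hypothetical helper, not part of the Edge Impulse SDK.
std::size_t count_windows(int clip_ms, int window_ms, int stride_ms) {
    if (window_ms <= 0 || stride_ms <= 0 || clip_ms < window_ms) return 0;
    return static_cast<std::size_t>((clip_ms - window_ms) / stride_ms) + 1;
}

// Index of the largest value (first one wins on ties).
std::size_t argmax(const std::vector<float>& scores) {
    std::size_t best = 0;
    for (std::size_t i = 1; i < scores.size(); ++i) {
        if (scores[i] > scores[best]) best = i;
    }
    return best;
}

// Majority vote: every window votes for its top class.
// Assumes all rows have the same number of classes.
std::size_t majority_vote(const std::vector<std::vector<float>>& per_window) {
    if (per_window.empty()) throw std::invalid_argument("no windows");
    std::vector<std::size_t> votes(per_window.front().size(), 0);
    for (const auto& scores : per_window) ++votes[argmax(scores)];
    std::size_t best = 0;
    for (std::size_t i = 1; i < votes.size(); ++i) {
        if (votes[i] > votes[best]) best = i;
    }
    return best;
}

// Mean score: sum the scores per class over all windows, then pick the
// largest. Dividing by the window count would not change the winner.
std::size_t mean_score_vote(const std::vector<std::vector<float>>& per_window) {
    if (per_window.empty()) throw std::invalid_argument("no windows");
    std::vector<float> sums(per_window.front().size(), 0.0f);
    for (const auto& scores : per_window) {
        for (std::size_t i = 0; i < sums.size(); ++i) sums[i] += scores[i];
    }
    return argmax(sums);
}
```

The two rules can disagree: with window scores {0.9, 0.1}, {0.4, 0.6}, {0.45, 0.55}, the majority vote picks class 1 while the mean score picks class 0, so it is worth trying both on your own clips.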

Regarding your error, I’ve updated the example and added a troubleshooting section here: https://docs.edgeimpulse.com/docs/running-your-impulse-locally#argument-list-too-long - you’ll need to update the example-standalone-inferencing repository though for this to work. Hope this helps!

Hi Jan @janjongboom, thanks for your reply! I appreciate your help a lot!! Is there a specific format for the .txt file? I have a .txt file that contains an array of 16000 values, but I got this error:

“Cannot open file output_cough_4.txt

The size of your ‘features’ array is not correct. Expected 16000 items, but had 0”

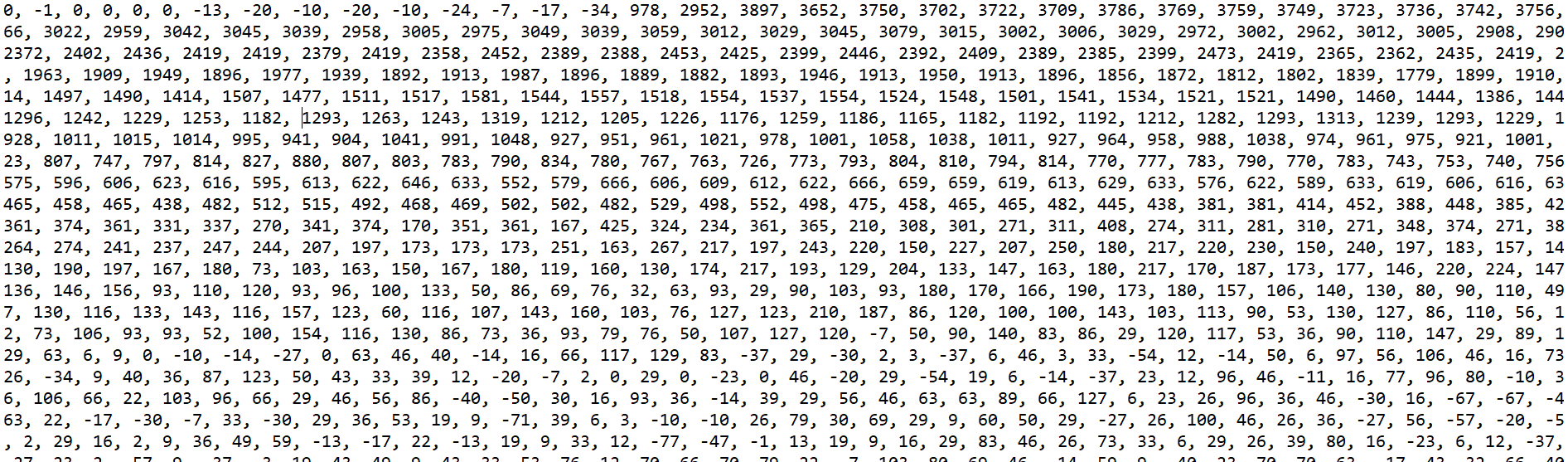

This is what my .txt file looks like. Thanks in advance!

Hi,

Cannot open file output_cough_4.txt

Is output_cough_4.txt in the same folder as where you run the command from? The error indicates that it cannot find this file…
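To make the two failure modes in that error easier to tell apart, here is a small C++ sketch of reading a features file. It is not the code from the example-standalone-inferencing repository; the function names are hypothetical, and it assumes the file holds plain numbers separated by commas. It reports a file that cannot be opened separately from a file that opens but holds the wrong number of values, which is why "had 0" above most likely followed from the file not being found.

```cpp
#include <cstddef>
#include <fstream>
#include <sstream>
#include <stdexcept>
#include <string>
#include <vector>

// Parse comma-separated numbers into floats (hypothetical helper).
// Tokens containing only whitespace, such as a trailing newline, are skipped.
std::vector<float> parse_features(const std::string& text) {
    std::vector<float> features;
    std::stringstream ss(text);
    std::string token;
    while (std::getline(ss, token, ',')) {
        if (token.find_first_not_of(" \t\r\n") == std::string::npos) continue;
        features.push_back(std::stof(token));  // throws on non-numeric text
    }
    return features;
}

// Load a features file and check that it holds the expected number of values.
// The path is resolved relative to the directory the program is run from.
std::vector<float> load_features(const std::string& path, std::size_t expected) {
    std::ifstream in(path);
    if (!in) throw std::runtime_error("Cannot open file " + path);

    std::stringstream buffer;
    buffer << in.rdbuf();
    std::vector<float> features = parse_features(buffer.str());

    if (features.size() != expected) {
        throw std::runtime_error("Expected " + std::to_string(expected) +
                                 " items, but had " +
                                 std::to_string(features.size()));
    }
    return features;
}
```

A call such as `load_features("output_cough_4.txt", 16000)` only succeeds if the file is in the current working directory, or if a relative or absolute path to it is given instead.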

Hi Jan, I see my problem now. I was in the example-standalone-inferencing directory when running the commands, but the .txt file was in the build directory. I moved the .txt file to the right directory and it is working now. Thanks so much for your help!