Question/Issue: I am unable to collect microphone data samples from my Arduino Nano RP2040 Connect board. I flashed the RP2040 firmware to the board and used the daemon to connect the board to Edge Impulse. Data from the inertial sensor is collected just fine. However, when I try to use the on-board microphone, the waveform is blank because no data is being collected from the microphone.

Project ID: 290300

Context/Use case: Speech recognition pipeline implementation

Hi @heeirthan,

The Arduino Nano RP2040 is not an officially supported board. As such, we do not support the microphone for that board out of the box. Our firmware is built for the Raspberry Pi Pico with some external sensors, which you can read about here: Raspberry Pi RP2040 - Edge Impulse Documentation

You can capture audio data, but it will require manually buffering, storing, and uploading audio files on your Arduino Nano RP2040.

Thank you.

I tried cloning a speech recognition project with existing datasets, before running the model. For the normal microphone example, the predictions stay at the same values and it does not seem like the model responds to anything coming in from the microphone. I have seen other projects online in Edge Impulse using the same board for speech recognition, so I am confused as to why it doesn’t work for my board.

I also wanted to know how data is being fed into the model when the model is running. If I can’t get it to work through the on-board mic, I would like to try to use data from an SD-card when running the model.

Hi @heeirthan,

Do you have a link to a project showing the Arduino Nano RP2040 being used for keyword spotting? If it’s this one from Robert John (https://www.youtube.com/watch?v=mIOmSOqs2xY), you might be able to follow his exact steps.

To feed the model with audio data, you will need to buffer slices of raw audio and call run_classifier_continuous() from the Edge Impulse C++ library. This can be tricky, as it often involves setting up DMA and double buffers. I have an example of doing this with a Wio Terminal in Arduino here: https://github.com/ShawnHymel/ei-keyword-spotting/blob/a738973eb63f0818f2e0d420b05bfffe5dde06bb/embedded-demos/arduino/wio-terminal/wio-terminal.ino.
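To make the double-buffer idea a bit more concrete, here is a minimal sketch in plain C++ with no Edge Impulse or Arduino dependencies. All names (`DoubleBuffer`, `push_sample`, `take_slice`, `kSliceSize`) are hypothetical and only for illustration. On real hardware, `push_sample` would be driven by the microphone ISR or DMA callback, and each completed slice would be handed to `run_classifier_continuous()`.

```cpp
#include <cstddef>
#include <cstdint>

// Hypothetical slice length, kept tiny for illustration.
// In a real project the slice size comes from the exported model.
constexpr std::size_t kSliceSize = 4;

struct DoubleBuffer {
    int16_t buffers[2][kSliceSize] = {};
    std::size_t write_index = 0;  // next free position in the buffer being filled
    int active = 0;               // index of the buffer the capture side writes to
    bool slice_ready = false;     // a full slice is waiting to be processed
    bool overrun = false;         // a slice filled before the previous one was taken
};

// Capture side: store one sample, swap buffers when the slice is full.
void push_sample(DoubleBuffer &db, int16_t sample) {
    db.buffers[db.active][db.write_index++] = sample;
    if (db.write_index == kSliceSize) {
        if (db.slice_ready) {
            // The consumer has not picked up the previous slice yet.
            db.overrun = true;
        }
        db.active ^= 1;
        db.write_index = 0;
        db.slice_ready = true;
    }
}

// Processing side: returns the most recently completed slice, or nullptr if none is ready.
const int16_t *take_slice(DoubleBuffer &db) {
    if (!db.slice_ready) {
        return nullptr;
    }
    db.slice_ready = false;
    return db.buffers[db.active ^ 1];
}
```

The `overrun` flag is set when the capture side finishes a second slice before the first one has been taken, which is the general situation a "sample buffer overrun" message describes. A real implementation would also need to protect the shared flags against concurrent access from the ISR.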

@heeirthan

To get started, review the code in this file. Thank you, hpssjellis.

- If you want to use your own microphone, you can see how it is handled on an Arduino Nano 33 BLE Sense in the file nano_ble33_sense_microphone.ino and adapt it to your hardware.

- Check the file static_buffer.ino to see how you can load an inference buffer. You can add code to this file to read your SD card and load the buffer.
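As a rough sketch of the "load a buffer and let the classifier read from it" idea, the plain C++ below copies a requested range of pre-recorded samples into a float output buffer. The names `recorded_audio`, `get_audio_data`, and `kWindowSamples` are hypothetical. The `(offset, length, float *)` callback shape is an assumption on my part about what the classifier expects, so check static_buffer.ino for the real signature. Reading from the SD card is left out; in a real sketch you would fill `recorded_audio` from the file first.

```cpp
#include <cstddef>
#include <cstdint>

// Hypothetical window length, kept tiny for illustration.
constexpr std::size_t kWindowSamples = 8;

// Stand-in for audio loaded from an SD card (filled elsewhere in a real sketch).
static int16_t recorded_audio[kWindowSamples];

// Copies `length` samples starting at `offset` into `out` as floats.
// Returns 0 on success, -1 if the requested range is outside the buffer.
int get_audio_data(std::size_t offset, std::size_t length, float *out) {
    if (offset > kWindowSamples || length > kWindowSamples - offset) {
        return -1;
    }
    for (std::size_t i = 0; i < length; ++i) {
        out[i] = static_cast<float>(recorded_audio[offset + i]);
    }
    return 0;
}
```

The bounds check matters because the classifier may request the window in several smaller chunks rather than in one call.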


Here is the video: https://www.youtube.com/watch?v=D_krGh5FWCQ&t=1743s

It seems like he is able to collect data directly from the microphone.


Hi @heeirthan,

Oh, that worked for me on my Arduino RP2040! I honestly did not know we officially supported the microphone on the Arduino RP2040. Do you have Chrome? Can you try using WebUSB (as shown here: https://edgeimpulse.com/blog/collect-sensor-data-straight-from-your-web-browser) instead of going through the CLI tool to see if that works?

Hi, I tried using WebUSB, but this is my output for mic:

The accelerometer and gyroscope still work just fine…

Hi @heeirthan,

That makes me think your microphone may be bad on that board. Can you try following this tutorial to see if the microphone works? https://docs.arduino.cc/tutorials/nano-rp2040-connect/rp2040-microphone-basics


This example works fine with my microphone. I will try to see if I can find another RP2040 board to try this on. Thank you

By the way, I am also using the RP2040 firmware, which should be right, as the platform recognizes the board as a nanorp2040 through WebUSB.

After trying a new board, the WebUSB method worked!

However, while trying to run my project in continuous mode, I am getting this message:

Error sample buffer overrun. Decrease the number of slices per model window EI_CLASSIFIER_SLICES_PER_MODEL_WINDOW is currently set to 1

ERR: Failed to record audio…

As you can see, I tried to reduce the number of slices, but I am still getting the same error.

Project ID is 289274.

Thanks

Hi @heeirthan,

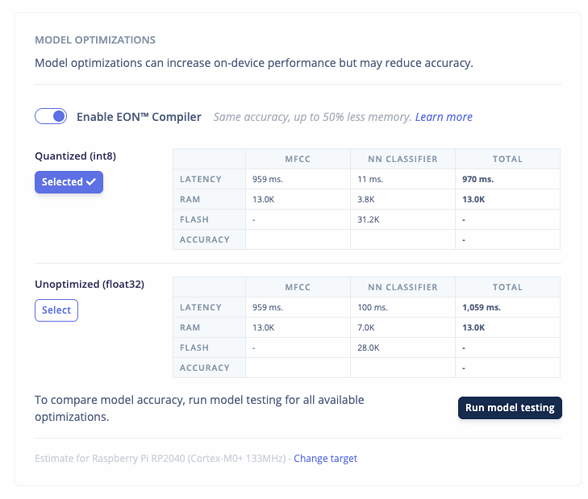

The RP2040 is a simple (dual-core) Arm Cortex-M0+ with no DSP acceleration. As a result, most DSP functions (such as computing MFCCs) are notoriously slow, even though the CPU is running at 133 MHz. If you go to the deployment page, you can see that the MFCC calculations take around 1 sec:

Because your window size is 1 sec, DSP plus inference takes longer than it takes to capture a new window of data. As a result, you will overrun your feature buffer (i.e. you’re trying to collect new data when the old data has not been fully processed yet). There are a few options to remedy this situation:

- Use a smaller window to prevent the overrun

- Try a simpler processing/DSP section (such as MFE) to see if that will meet your needs

- Switch to a processor with DSP acceleration, such as an Arm Cortex-M4F
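To put numbers on the overrun, here is a small timing-budget check in plain C++. The function names are hypothetical. The only figure taken from this thread is the roughly 1 sec MFCC time; any per-slice processing time you plug in is something you would have to measure on your own board.

```cpp
// Time (in ms) it takes to capture one slice of audio.
// Returns 0 if slices_per_window is 0, so the check below fails safely.
double slice_capture_ms(double window_ms, unsigned slices_per_window) {
    if (slices_per_window == 0) {
        return 0.0;
    }
    return window_ms / slices_per_window;
}

// True if processing one slice (DSP + inference) finishes within the time
// it takes to capture the next slice, i.e. no buffer overrun is expected.
bool keeps_up(double window_ms, unsigned slices_per_window, double processing_ms_per_slice) {
    return processing_ms_per_slice <= slice_capture_ms(window_ms, slices_per_window);
}
```

For example, with a 1000 ms window, 1 slice per window, and about 1000 ms of MFCC time plus any inference time at all, `keeps_up` returns false, which matches the overrun you are seeing.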

Hope that helps!
